FITIZENS | The AI Fitness Coach

AI-powered video coach that analyzes exercise technique. Built from scratch by a two-person team. Published on App Store and Play Store.

We founded FITIZENS in 2022 with one goal: build an AI coach that could watch you exercise and tell you what to fix. Not a rep counter. Not a pose overlay. A coach that understands biomechanics, spots technique issues, and explains them in plain language.

Over four years, we built the entire product from scratch. Two co-founders, no external funding. The mobile app, the AI video analysis pipeline, the serverless backend, the data infrastructure, the sensor fusion firmware, the exercise detection algorithms. All built in-house.

Two Pivots, Two Rebuilds

First Pivot: B2B to B2C

We started out building a gym management tool that relied on Bluetooth IMU sensors attached to equipment, but gyms were not interested in adopting hardware. We pivoted to a consumer app that athletes could use on their own.

Second Pivot: Sensors to Camera

We had spent over a year building C and C++ libraries for sensor fusion and exercise detection, plus the Bluetooth integration, plus the hardware procurement pipeline. Then we decided to bet on LLM-based video analysis instead. Sensors gave us precise, real-time data. Camera-based AI gave us accessibility: no extra hardware, no setup, just a phone. Different trade-offs, but accessibility won for a consumer product.

Each pivot preserved the domain knowledge we had accumulated (exercise catalog, biomechanics, UX patterns) while replacing the technical layer underneath. The hardest engineering you do might be the engineering you throw away.

- 2022 Q1: Sensor fusion & exercise detection R&D

- 2023 Q1: Fitizens 1.0, real demo with users

- 2024 Q3: 1st Pivot: B2B to B2C

- 2025 Q4: 2nd Pivot: AI-video technique analysis

- 2026 Q1: Fitizens 2.0

Fitizens 2.0: AI Video Coach

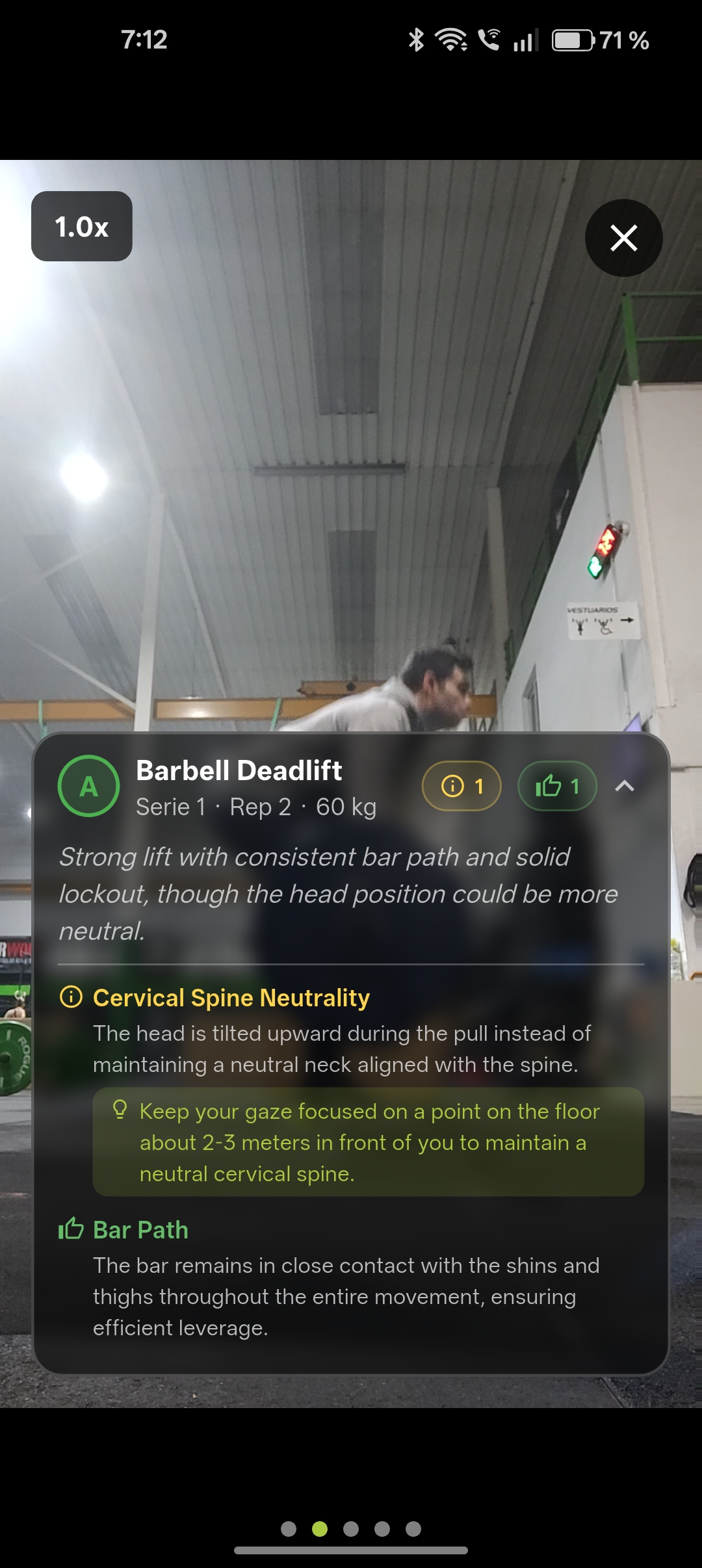

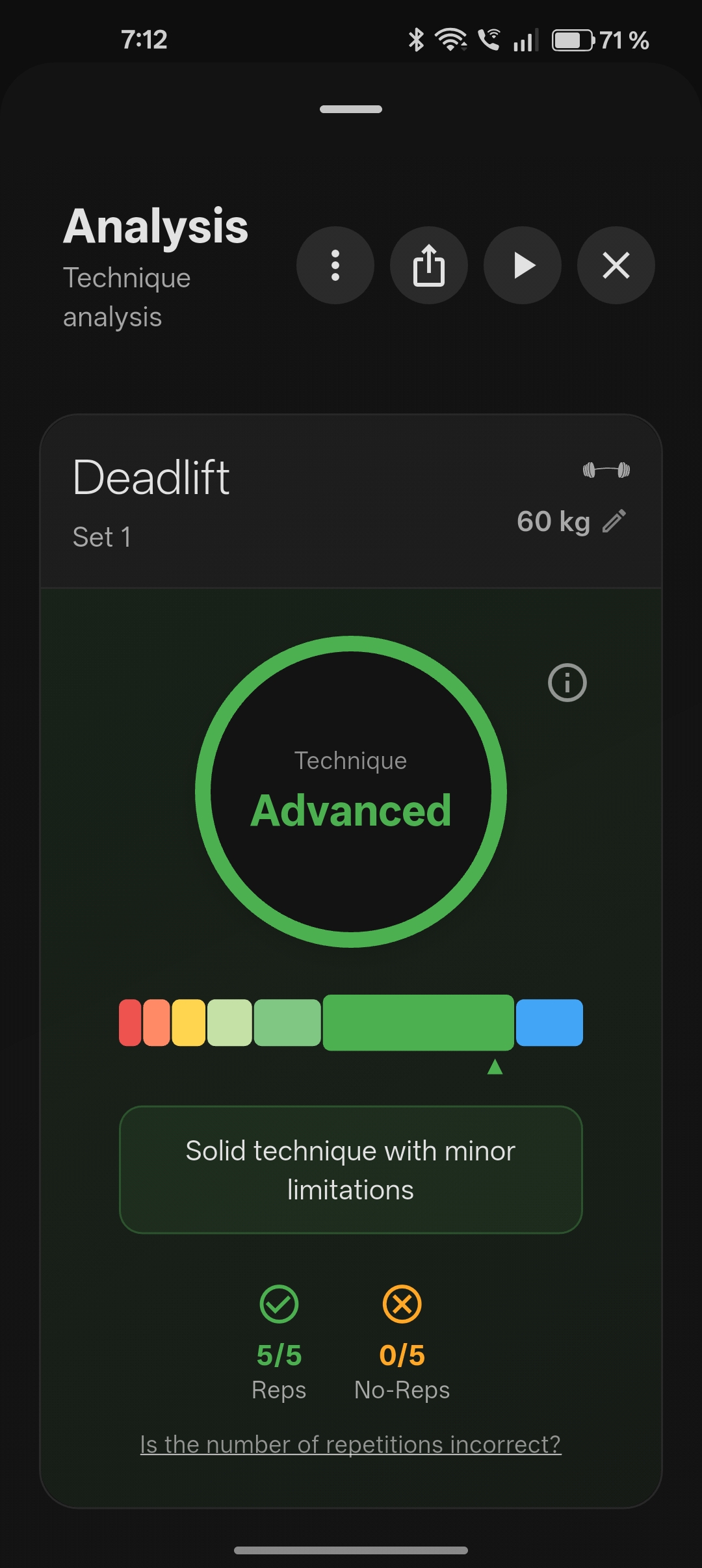

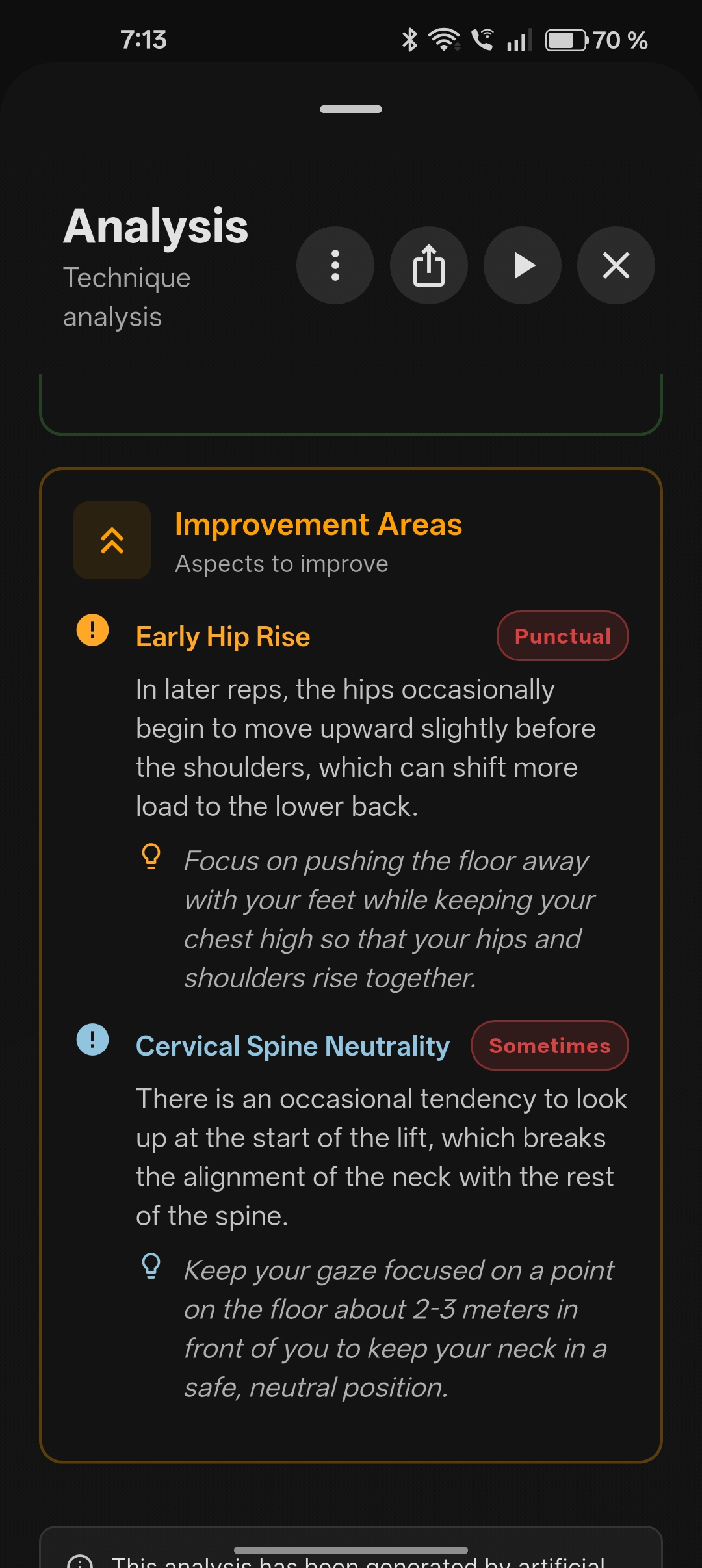

You open the app, pick an exercise, and record a video of your set. The AI watches every frame, counts your reps, scores each one, detects technique issues, and gives you specific feedback on what to improve. All from a phone camera, no extra hardware needed.

The feedback is not generic. Each exercise has its own biomechanical rubric: what good form looks like, what the common mistakes are, what makes a rep a no-rep. The AI debates itself internally before scoring, arguing both sides to reduce overconfidence.

We built a catalog of 527+ exercises, each with its own rubric, muscle mappings, and quality criteria. The scoring system ranges from 'Excellent' to 'Unsafe', with specific coaching cues for every level.
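To make the rubric idea concrete, here is a minimal sketch of how one catalog entry could be modelled. Only the 'Excellent' and 'Unsafe' ends of the scale come from the description above; the intermediate levels, the field names, and the air squat content are illustrative assumptions, not the actual catalog format.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class RepScore(IntEnum):
    """Score scale. Only EXCELLENT and UNSAFE are named in the text;
    the levels in between are hypothetical placeholders."""
    UNSAFE = 0
    POOR = 1        # assumed level
    ACCEPTABLE = 2  # assumed level
    GOOD = 3        # assumed level
    EXCELLENT = 4


@dataclass
class ExerciseRubric:
    """One catalog entry: what good form is, what goes wrong, what to say."""
    exercise_id: str
    muscles: list[str]
    good_form: list[str]
    common_mistakes: list[str]
    no_rep_criteria: list[str]
    cues: dict[RepScore, str] = field(default_factory=dict)

    def cue_for(self, score: RepScore) -> str:
        """Return the coaching cue for a score, or an empty string if none is set."""
        return self.cues.get(score, "")


# Illustrative entry; the wording is made up for this sketch.
air_squat = ExerciseRubric(
    exercise_id="air_squat",
    muscles=["quadriceps", "glutes"],
    good_form=["hips below knees at the bottom", "heels stay on the floor"],
    common_mistakes=["knees caving in", "heels lifting"],
    no_rep_criteria=["hips never pass below the knees"],
    cues={
        RepScore.EXCELLENT: "Keep doing exactly this.",
        RepScore.UNSAFE: "Stop the set and reduce the range of motion.",
    },
)
```

Using an ordered enum means scores can be compared or averaged across reps without string matching.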

We tested it with 200+ athletes across CrossFit boxes in Madrid, iterating on the product based on real training sessions and real feedback. The app is published on both the App Store and Google Play.

System Architecture

The stack covers everything from mobile to AI to infrastructure. The diagram below is a simplified view of how the main pieces connect. In practice, each box hides significant complexity: the AI pipeline alone has multiple specialized stages, the backend orchestrates Cloud Functions, and the data layer feeds schemas and exercise catalogs into nearly every service.

graph LR

App(fa:fa-mobile-screen-button Mobile App)

Web(fa:fa-globe Web App)

Cloud(fa:fa-cloud Backend)

AI(fa:fa-brain Multi-Stage LLM Video Analysis)

LLM(fa:fa-robot LLM Provider)

Pay(fa:fa-credit-card Payments)

Eval(fa:fa-chart-line Evaluation)

App -->|Video upload| Cloud

Web -->|Video upload| Cloud

Cloud -->|Triggers| AI

AI -->|Calls| LLM

AI -->|Results| Cloud

Cloud -->|Syncs| App

Pay <--> Cloud

AI <--> Eval

Mobile App: Fitizens Athlete

A Flutter app published on App Store and Google Play. Seven feature modules covering video recording, AI analysis, exercise catalog, session history, subscriptions, onboarding, and settings. On-device video processing before anything hits the cloud.

Cloud Backend

Everything that happens between the user pressing 'Analyze' and receiving their coaching report. Built entirely around Firebase: Cloud Functions written in Python trigger the AI pipeline, manage subscription lifecycles, process payments across three platforms (Stripe, Apple IAP, Google Play), automate emails, and track analytics. Firestore stores all user and analysis data, Cloud Storage handles video uploads, and Firebase Auth manages authentication.

graph LR

Client(fa:fa-mobile-screen-button Client Apps)

Auth(fa:fa-lock Firebase Auth)

Storage(fa:fa-hard-drive Cloud Storage)

DB[(fa:fa-database Firestore)]

CF(fa:fa-bolt Cloud Functions)

Stripe(fa:fa-credit-card Stripe)

Apple(fa:fa-mobile-screen Apple IAP)

Google(fa:fa-mobile-screen Google Play)

Email(fa:fa-envelope Email)

AI(fa:fa-brain Multi-Stage LLM Video Analysis)

Client --> Auth

Client --> Storage

Client --> DB

Storage -->|Triggers| CF

CF -->|Writes| DB

Stripe -->|Webhooks| CF

Apple -->|Webhooks| CF

Google -->|Webhooks| CF

CF --> Email

CF --> AI
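The "Storage triggers Cloud Functions" edge in the diagram can be sketched as a small pure-Python helper that decides whether an uploaded object should start an analysis. The bucket layout (`videos/<uid>/<analysis_id>.<ext>`), the `AnalysisJob` fields, and the `queued` status are assumptions made for illustration; in a real Firebase project this logic would sit inside a storage-triggered function, and the returned job would be written to Firestore.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical bucket layout: videos/<uid>/<analysis_id>.<ext>
VIDEO_PREFIX = "videos/"


@dataclass
class AnalysisJob:
    uid: str
    analysis_id: str
    storage_path: str
    status: str = "queued"  # assumed initial status


def job_from_upload(path: str, content_type: str) -> Optional[AnalysisJob]:
    """Return a job for a freshly uploaded video, or None to ignore the object."""
    if not path.startswith(VIDEO_PREFIX) or not content_type.startswith("video/"):
        return None
    parts = path[len(VIDEO_PREFIX):].split("/")
    if len(parts) != 2 or not all(parts):
        return None  # unexpected nesting or empty path segment
    uid, filename = parts
    analysis_id = filename.rsplit(".", 1)[0]  # drop the file extension
    return AnalysisJob(uid=uid, analysis_id=analysis_id, storage_path=path)
```

Keeping the decision logic free of SDK calls makes it easy to unit test without an emulator.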

Multi-Stage LLM Video Analysis

A pipeline that processes exercise videos through multiple specialized steps. It detects the number of repetitions, then for each rep it analyzes execution technique, extracting detailed information about issues and strengths in the movement. The system produces structured reports with per-rep scoring, confidence levels, and bilingual coaching feedback.
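The two-step flow described above (detect reps, then analyze each one) could be wired together roughly as follows. This is a sketch under stated assumptions: the stage functions are passed in as plain callables so that no particular LLM provider API is implied, and the report fields, the `RepSpan` type, and the `en`/`es` language codes are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

RepSpan = tuple[float, float]  # (start_seconds, end_seconds), assumed representation


@dataclass
class RepReport:
    index: int
    score: str
    confidence: float
    issues: list[str]
    strengths: list[str]
    feedback: dict[str, str]  # language code -> coaching text


@dataclass
class VideoReport:
    exercise_id: str
    reps: list[RepReport] = field(default_factory=list)


def analyze_video(
    video_path: str,
    exercise_id: str,
    detect_reps: Callable[[str, str], list[RepSpan]],
    analyze_rep: Callable[[str, str, RepSpan, int], RepReport],
) -> VideoReport:
    """Stage 1 finds rep boundaries; stage 2 scores each rep on its own."""
    report = VideoReport(exercise_id=exercise_id)
    for index, span in enumerate(detect_reps(video_path, exercise_id), start=1):
        report.reps.append(analyze_rep(video_path, exercise_id, span, index))
    return report


# Stand-in stages for demonstration; real stages would call the model.
def fake_detect(video_path: str, exercise_id: str) -> list[RepSpan]:
    return [(0.0, 2.1), (2.1, 4.3)]


def fake_analyze(video_path: str, exercise_id: str, span: RepSpan, index: int) -> RepReport:
    return RepReport(
        index=index,
        score="Excellent",
        confidence=0.9,
        issues=[],
        strengths=["full depth"],
        feedback={"en": "Great rep.", "es": "Buena repetición."},
    )


demo_report = analyze_video("set.mp4", "air_squat", fake_detect, fake_analyze)
```

Injecting the stages as callables also lets an evaluation harness swap in recorded outputs instead of live model calls.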

LLM Evaluation Pipeline

How do you know your AI coach is actually good? We built a dedicated evaluation framework using 900+ real exercise videos from actual users. Every video has human-annotated ground truth, and the system automatically compares AI predictions against expert judgement, tracking accuracy across prompt versions and model updates.
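As a rough illustration of tracking accuracy across prompt versions, the sketch below compares predictions with human labels and aggregates exact-match accuracy per version. The record keys (`prompt_version`, `predicted`, `expected`) and the use of rep counts as the compared value are assumptions; the actual framework may use different metrics.

```python
from collections import defaultdict
from typing import Iterable


def accuracy_by_version(records: Iterable[dict]) -> dict[str, float]:
    """Exact-match accuracy of predictions against ground truth, per prompt version."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for record in records:
        version = record["prompt_version"]
        totals[version] += 1
        hits[version] += int(record["predicted"] == record["expected"])
    return {version: hits[version] / totals[version] for version in totals}


# Made-up records: predicted vs. annotated rep counts for three videos.
sample_records = [
    {"prompt_version": "v1", "predicted": 9, "expected": 10},
    {"prompt_version": "v1", "predicted": 10, "expected": 10},
    {"prompt_version": "v2", "predicted": 10, "expected": 10},
]
sample_accuracy = accuracy_by_version(sample_records)
```

Running the same labeled set against every new prompt or model version turns regressions into a number that can be compared over time.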

Data Infrastructure

The real competitive advantage. Years of accumulated domain knowledge, codified into structured data that powers every layer of the product. A central repository of 50 JSON schemas serves as the single source of truth across all services, auto-generating typed Python models published to a private package registry.

What we built and collected:

- 527+ exercise catalog: Bilingual descriptions, muscle group mappings, quality criteria, and biomechanical rubrics defining what good form looks like, common mistakes, and coaching cues for each exercise

- 900+ annotated exercise videos: Real exercise videos from actual users for LLM evaluation

- 40,000+ labeled repetitions: From professional trainers across 130 exercises (sensor era)

- Exercise detection datasets: Raw accelerometer and gyroscope data, human-labeled in Label Studio

- 50 JSON schemas: Defining every data contract across the system

- Auto-generated Python models: Published to a private package registry
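The schema-to-model idea can be illustrated with a minimal sketch that builds a typed dataclass from a JSON Schema at runtime. A production generator would more likely emit source files and handle nested objects, enums, and references; the `Exercise` schema, the type mapping, and the helper name `model_from_schema` are all hypothetical.

```python
import json
from dataclasses import field, fields, make_dataclass
from typing import Any, Optional

# Simplified mapping from JSON Schema types to Python types (assumption).
JSON_TO_PY = {
    "string": str, "integer": int, "number": float,
    "boolean": bool, "array": list, "object": dict,
}


def model_from_schema(schema: dict) -> type:
    """Build a dataclass whose fields mirror the schema's top-level properties."""
    required = set(schema.get("required", []))
    props = schema["properties"]
    spec = []
    # Required fields go first: dataclasses forbid non-default fields after defaults.
    for name in sorted(props, key=lambda n: n not in required):
        py_type = JSON_TO_PY.get(props[name].get("type"), Any)
        if name in required:
            spec.append((name, py_type))
        else:
            spec.append((name, Optional[py_type], field(default=None)))
    return make_dataclass(schema["title"], spec)


EXERCISE_SCHEMA = json.loads("""
{
  "title": "Exercise",
  "type": "object",
  "properties": {
    "id": {"type": "string"},
    "muscles": {"type": "array"},
    "name": {"type": "string"}
  },
  "required": ["id", "name"]
}
""")

Exercise = model_from_schema(EXERCISE_SCHEMA)
squat = Exercise(id="air_squat", name="Air Squat")
```

Because every service imports the same generated models, a schema change shows up as a type error rather than a runtime surprise.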

Fitizens 1.0: IMU-Based AI Coach

Before the camera pivot, the product ran on Bluetooth IMU sensors. We used Movesense devices to capture raw accelerometer and gyroscope signals during exercise execution. The system counted repetitions in real time and delivered millisecond-precision analytics. That era produced some of the most technically demanding work in the project.

IMU Hardware & Sensor Fusion

Movesense sensors were worn on a chest strap and streamed 9-axis IMU data (accelerometer, gyroscope, magnetometer) over Bluetooth Low Energy at 104 Hz. We handled device discovery, pairing, connection lifecycle, and real-time data streaming from Dart.

We developed a C++ sensor fusion firmware (Madgwick AHRS algorithm, accessed from Dart via FFI) that converts raw IMU signals into processed orientation and acceleration signals, enabling the system to detect a person performing exercises in real time.
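For readers unfamiliar with the Madgwick filter, here is a compact Python sketch of its gyroscope-plus-accelerometer update step. It is not the C++ code described above: it omits the magnetometer correction, and the gain `beta=0.1` is an assumed value. The 104 Hz sample period matches the streaming rate mentioned earlier.

```python
import math


def madgwick_imu_update(q, gyro, accel, beta=0.1, dt=1.0 / 104.0):
    """One Madgwick step. q is (w, x, y, z); gyro in rad/s; accel in any unit."""
    q0, q1, q2, q3 = q
    gx, gy, gz = gyro
    ax, ay, az = accel
    # Quaternion rate of change predicted by the gyroscope: 0.5 * q * (0, g).
    d0 = 0.5 * (-q1 * gx - q2 * gy - q3 * gz)
    d1 = 0.5 * (q0 * gx + q2 * gz - q3 * gy)
    d2 = 0.5 * (q0 * gy - q1 * gz + q3 * gx)
    d3 = 0.5 * (q0 * gz + q1 * gy - q2 * gx)
    a_norm = math.sqrt(ax * ax + ay * ay + az * az)
    if a_norm > 0.0:
        ax, ay, az = ax / a_norm, ay / a_norm, az / a_norm
        # Error between gravity predicted from q and the measured direction.
        f0 = 2.0 * (q1 * q3 - q0 * q2) - ax
        f1 = 2.0 * (q0 * q1 + q2 * q3) - ay
        f2 = 2.0 * (0.5 - q1 * q1 - q2 * q2) - az
        # Gradient of the squared error (Jacobian transpose times f).
        s0 = -2.0 * q2 * f0 + 2.0 * q1 * f1
        s1 = 2.0 * q3 * f0 + 2.0 * q0 * f1 - 4.0 * q1 * f2
        s2 = -2.0 * q0 * f0 + 2.0 * q3 * f1 - 4.0 * q2 * f2
        s3 = 2.0 * q1 * f0 + 2.0 * q2 * f1
        s_norm = math.sqrt(s0 * s0 + s1 * s1 + s2 * s2 + s3 * s3)
        if s_norm > 0.0:
            # Pull the gyro estimate toward the accelerometer reading.
            d0 -= beta * s0 / s_norm
            d1 -= beta * s1 / s_norm
            d2 -= beta * s2 / s_norm
            d3 -= beta * s3 / s_norm
    # Integrate and renormalize to keep a unit quaternion.
    q0, q1, q2, q3 = q0 + d0 * dt, q1 + d1 * dt, q2 + d2 * dt, q3 + d3 * dt
    n = math.sqrt(q0 * q0 + q1 * q1 + q2 * q2 + q3 * q3)
    return (q0 / n, q1 / n, q2 / n, q3 / n)


def gravity_direction(q):
    """Gravity direction in the sensor frame implied by orientation q."""
    q0, q1, q2, q3 = q
    return (2.0 * (q1 * q3 - q0 * q2),
            2.0 * (q0 * q1 + q2 * q3),
            2.0 * (0.5 - q1 * q1 - q2 * q2))
```

Feeding a stationary sensor's readings into the update repeatedly should pull a tilted initial estimate back toward the measured gravity vector; `beta` trades convergence speed against noise sensitivity.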

Exercise Detection & ML Operations

We developed 30 deep learning models that detect over 130 exercises from IMU data in real time. We collected over 40,000 repetitions from professional CrossFit trainers to train and validate the models. The models are Temporal Convolutional Networks, quantized to TensorFlow Lite for on-device inference in under 5 ms.
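The core building block of a Temporal Convolutional Network is the dilated causal convolution, sketched below in plain Python for a single channel. This is a teaching example, not the production architecture: the kernel, the dilation schedule, and the assumption of one convolution per dilation level are all illustrative.

```python
def causal_dilated_conv1d(signal, kernel, dilation):
    """y[t] = sum_k kernel[k] * x[t - k * dilation], treating x[t < 0] as zero.

    Causal means the output at time t never looks at future samples,
    which is what allows the model to run on a live sensor stream.
    """
    out = []
    for t in range(len(signal)):
        acc = 0.0
        for k, weight in enumerate(kernel):
            idx = t - k * dilation
            if idx >= 0:
                acc += weight * signal[idx]
        out.append(acc)
    return out


def receptive_field(kernel_size, dilations):
    """Samples of history seen by a stack with one conv per dilation level."""
    return 1 + (kernel_size - 1) * sum(dilations)


# With kernel size 3 and dilations 1, 2, 4, 8 the stack sees 31 samples,
# which is roughly 0.3 s of signal at 104 Hz.
example_field = receptive_field(3, [1, 2, 4, 8])
```

Doubling the dilation at each level grows the receptive field exponentially with depth while the per-sample cost stays linear in the number of layers.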

Behind the models, we built an automated pipeline for labelling, training, and deploying models directly to the device: ETL from Firebase to Label Studio for human annotation, experiment tracking with MLflow and Optuna for hyperparameter optimization, and deployment of quantized models to mobile devices.

By The Numbers

| Metric | Value |
| --- | --- |
| Exercise catalog | 527+ |
| Cloud Functions | 14 |
| Deep learning models | 30 |
| Labeled repetitions | 40,000+ |
| Real users tested | 200+ |
| Platforms | iOS + Android |
| Team size | 2 co-founders |